Sunday 28 August

from 14:00 CEST until 12:00 noon on Monday 29 August

Mélia Roger & Eric Larrieux

Air Listening Station

The Air Listening Station is an ongoing sonic exploration created by sound artists and researchers Mélia Roger and Eric Larrieux that is focused on raising awareness of declining air quality in modern urban landscapes. Through the use of sonification (the process of using non-speech audio to convey information or perceptualize data) of air quality data, this project aims to create actionable emotional knowledge which will hopefully inspire societal change.

In this broadcast, 22 hours of field recordings and interviews are transformed into an ever-evolving soundscape by means of a generative granular synthesis engine developed in the SuperCollider audio programming language. The algorithm takes air quality data covering the same time period as the audio source material, provided by a nearby mobile measurement station from the Air Quality Lab of Umwelt- und Gesundheitsschutz Zürich (the Environmental and Health Protection department of Zurich). Specifically, the measurements of all inhalable particles smaller than 10 and 2.5 microns in diameter are used to control the two primary parameters of the granular synthesis engine, grain duration and density, respectively.
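The mapping described above can be illustrated with a minimal Python sketch. It is not the project's SuperCollider code: the function names, the input ranges, the output ranges, and the direction of each mapping (more particles giving shorter grains and a denser grain stream) are all assumptions chosen for illustration. Only the pairing itself comes from the text: the reading for particles under 10 microns (here `pm10`) drives grain duration, and the reading for particles under 2.5 microns (here `pm25`) drives grain density.

```python
def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamped at both ends."""
    t = (value - in_lo) / (in_hi - in_lo)
    t = min(1.0, max(0.0, t))  # keep out-of-range readings inside the output range
    return out_lo + t * (out_hi - out_lo)


def grain_params(pm10, pm25):
    """Return (grain duration in seconds, grain density in grains per second).

    All numeric ranges below are hypothetical placeholders, not values
    taken from the Air Listening Station.
    """
    # Assumption: higher readings for particles < 10 microns shorten the grains.
    duration_s = scale(pm10, 0.0, 50.0, 1.0, 0.05)
    # Assumption: higher readings for particles < 2.5 microns raise the density.
    density_hz = scale(pm25, 0.0, 25.0, 2.0, 40.0)
    return duration_s, density_hz


if __name__ == "__main__":
    for reading in [(5.0, 2.0), (25.0, 12.5), (80.0, 40.0)]:
        print(reading, grain_params(*reading))
```

Clamping is a design choice in this sketch: it keeps an unusually high reading from producing a negative grain duration, at the cost of flattening any detail above the assumed upper bound.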

The “real-time” nature of the sonification process means that the data typically varies quite slowly (with significant changes often occurring on the order of hours), so it is necessary to include more generative elements to regulate this otherwise deterministic algorithm. Digital signal processing techniques are used to derive additional information about the local temporal variability of the data (medium- and short-term rate of change via standard deviation and a peak detector, respectively); these derived values are then mapped to variables controlling random variations in granular synthesis parameters such as grain pitch and source position within the field recording.
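As a rough illustration of this idea, the Python sketch below tracks a sliding-window standard deviation (medium-term variability) and a decaying peak detector on the sample-to-sample change (short-term variability), then uses both to scale random offsets. Everything specific here is an assumption: the class and function names, the window length, the decay factor, the scaling limits, and the choice to pair standard deviation with pitch and the peak value with source position. The text does not say which measure drives which parameter, and the actual engine is written in SuperCollider.

```python
import random
import statistics
from collections import deque


class VariabilityTracker:
    """Tracks medium- and short-term variability of a slowly changing data stream."""

    def __init__(self, window=30, decay=0.9):
        self.history = deque(maxlen=window)  # hypothetical window length, in samples
        self.decay = decay                   # hypothetical per-sample peak decay
        self.peak = 0.0
        self.previous = None

    def update(self, sample):
        """Feed one reading; return (standard deviation, decaying peak of change)."""
        self.history.append(sample)
        spread = statistics.pstdev(self.history)
        step = 0.0 if self.previous is None else abs(sample - self.previous)
        # Peak detector: jump up to a new maximum at once, otherwise decay slowly.
        self.peak = max(step, self.peak * self.decay)
        self.previous = sample
        return spread, self.peak


def random_offsets(spread, peak, rng, max_semitones=12.0, max_seconds=60.0,
                   spread_limit=10.0, peak_limit=10.0):
    """Scale random pitch and source-position offsets by the two variability measures."""
    pitch_amount = min(1.0, spread / spread_limit)
    position_amount = min(1.0, peak / peak_limit)
    pitch_offset = rng.uniform(-1.0, 1.0) * pitch_amount * max_semitones
    position_offset = rng.uniform(-1.0, 1.0) * position_amount * max_seconds
    return pitch_offset, position_offset


if __name__ == "__main__":
    rng = random.Random(0)
    tracker = VariabilityTracker()
    for reading in [10.0, 10.0, 10.0, 20.0, 20.0]:
        spread, peak = tracker.update(reading)
        print(reading, random_offsets(spread, peak, rng))
```

With steady readings both measures stay at zero and no random offset is applied; a sudden jump raises them, which widens the range of the random variations until the peak decays and the window fills with the new level.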

The result of this process is a soundscape where good air quality generally leads to more or less normal playback of the field recordings with slow, minimal variations, while poor air quality causes more extreme (and rapidly changing) transformations of the underlying source material, creating abstract and often haunting, yet somehow familiar sonic textures. The generative nature of the process means that there are nearly infinite ways that bad air quality can sound. Nonetheless, such conditions should typically be identifiable by the increasingly drastic departures from the original recordings. This strategy aims to strike a balance between direct representations of scientific data, which are often uninteresting, and more aesthetically pleasing ones in which the underlying information tends to be completely obscured, and in doing so to elicit a lasting emotional response from the listener.

You will hear the voices of the technicians of the UGZ (Umwelt- und Gesundheitsschutz Zürich, the Environmental and Health Protection department of Zurich) describing the particles and their impact on air quality. We thank the UGZ for their support and collaboration in this project.

UGZ sensor station

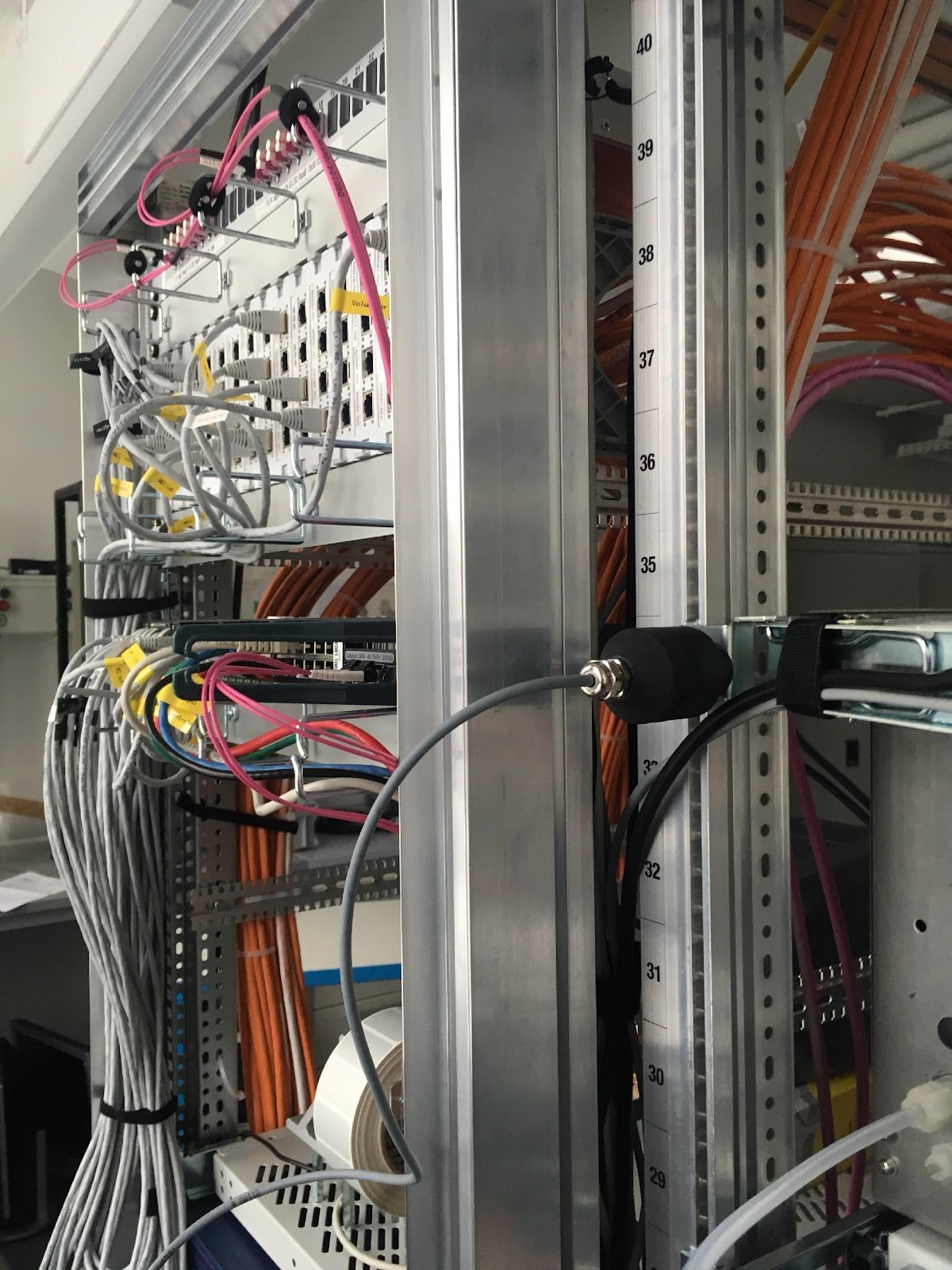

Inside of sensor station

Sensor location in Zurich

Mélia Roger (*1996, France) is a sound artist and sound designer for film and installations. She received the Pierre Schaeffer prize for her piece "Birds and wires" at the Phonurgia Nova festival. Her work explores the sonic poetics of the landscape through field recordings and active listening performances. Focusing on relations between humans and non-humans, she tries to inspire ecological change with environmental and empathic listening. The Air Listening Station is her first collaboration involving the sonification of scientific data.

Composer, electrical engineer, educator, and creative technologist Eric Larrieux earned his BS in Electrical Engineering from Boston University in 2004, MS in Electrical Engineering from Northeastern University in 2009, and CAS in Computer Music and MA in Electroacoustic Composition from Zürcher Hochschule der Künste (ZHdK) in 2018 and 2021, respectively. He is currently employed as a research associate studying the intersection of art and technology at the Immersive Arts Space as well as at the Institute for Computer Music and Sound Technology, both at ZHdK. His professional background lies predominantly in the fields of signal processing R&D, sensor and system integration, data science, and robotics. Beyond music composition, he works on the topics of AI and machine learning, physical computing, 3D audio, artistic installations, and live electronics.